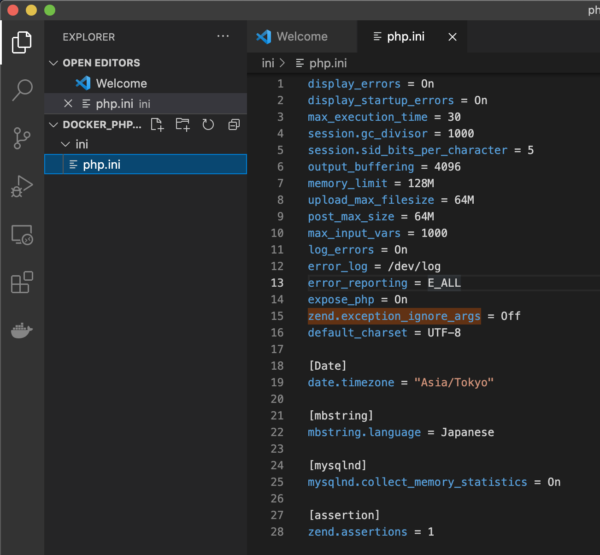

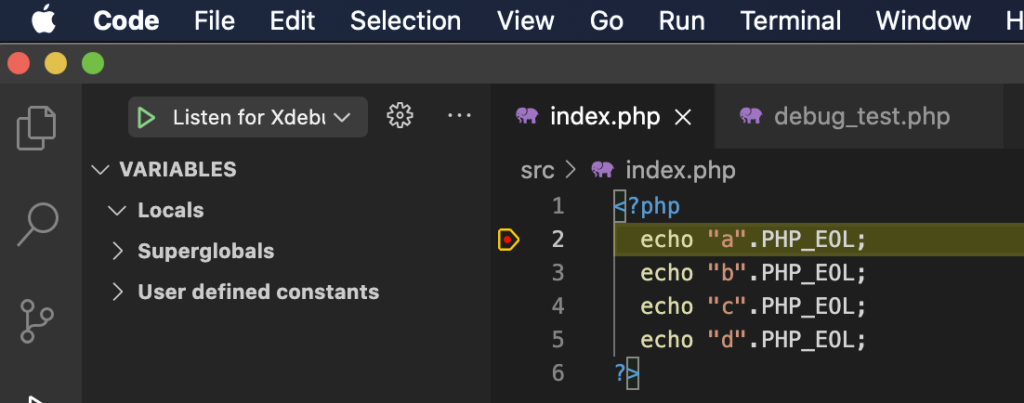

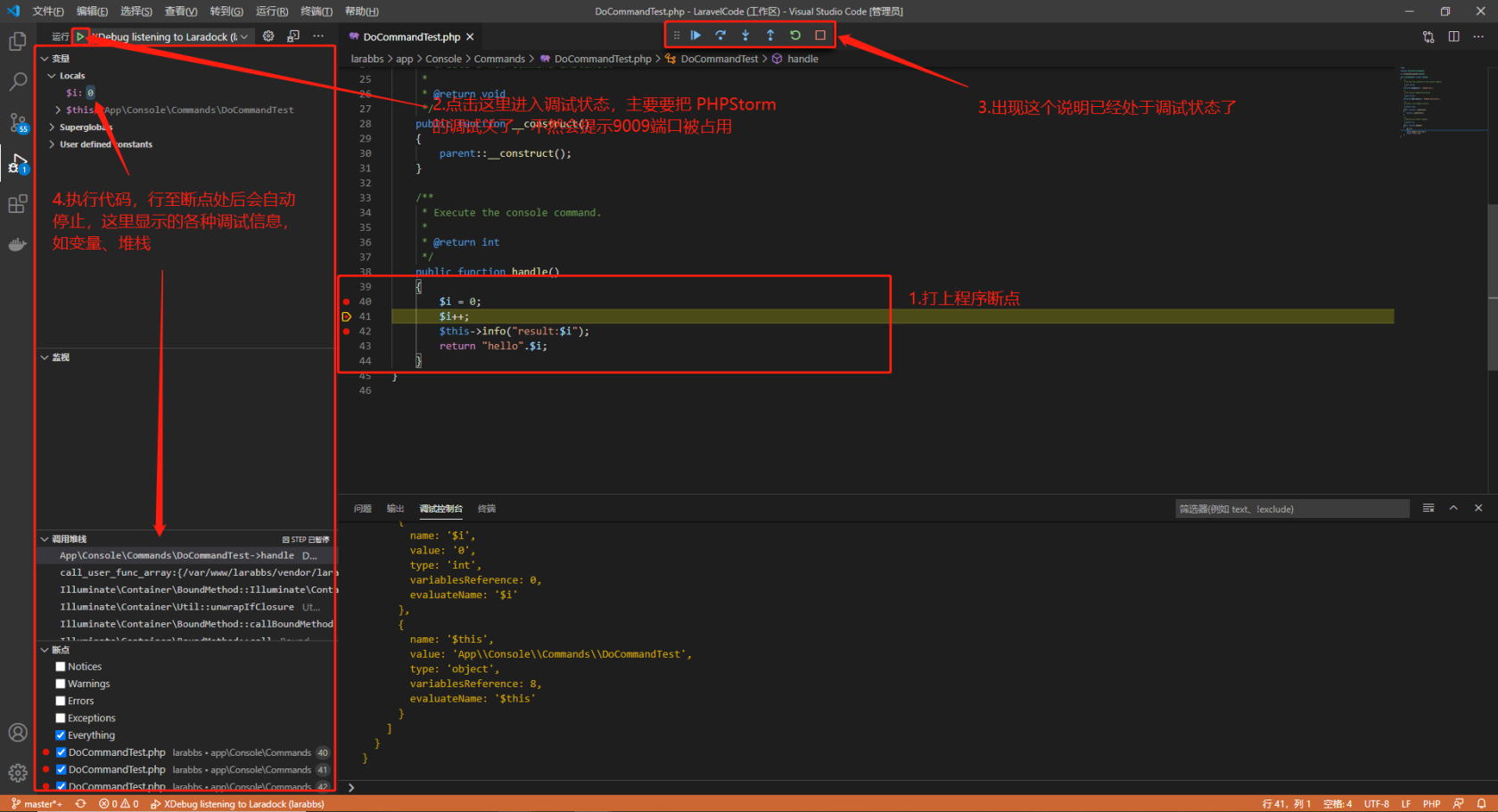

Container technologies, such as Docker, simplify dependency management and improve portability of your software. In this series of articles, we explore Docker usage in Machine Learning (ML) scenarios. This series assumes that you are familiar with AI/ML, containerization in general, and Docker in particular.

In the previous article of the series, we exposed inference NLP models via a REST API using FastAPI and Gunicorn with a Uvicorn worker. It allowed us to run our NLP models in a web browser. In this article, we’ll use Visual Studio Code to debug our service running in the Docker container. You are welcome to download the code used in this article.

We are assuming that you are familiar with Visual Studio Code, a very light and flexible code editor. It is available for Windows, macOS, and Linux. Thanks to its extensibility, it supports a very long list of languages and technologies. You can find plenty of tutorials at the official website. We’ll use it to run and debug our Python service.

First, we need to install three Visual Studio Code extensions. After installation, we can open our project (created in the previous article). Alternatively, you can download this article’s source code. In the following examples, we are assuming that you chose the latter option.

We’ll use the same Dockerfile and almost the same docker-compose.yml that we have used before. The only change we introduce is the new image name, solely to keep a one-to-one relation between an article and an image name.

Configuring Project to Work in Docker

Now, we need to instruct Visual Studio Code on how to run our container. The configuration lives in the .devcontainer folder (don’t miss the dot at the beginning). The first file, docker-compose-overwrites.yml, defines parts of our docker-compose.yml that we want to add or change when working with our container from Visual Studio Code.
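To make the role of the docker-compose-overwrites.yml file mentioned above more concrete, here is a minimal sketch of what such an override file could look like. It is not the article's actual file: the service name `nlp-service`, the `/workspace` mount point, and the keep-alive command are all assumptions chosen for illustration, and the service name would have to match the one in your own docker-compose.yml.

```yaml
# Hypothetical override file (illustration only, not the article's original).
# Docker Compose merges it on top of docker-compose.yml, so only the keys
# that should be added or changed for Visual Studio Code are listed here.
services:
  nlp-service:            # assumed name; must match the service in docker-compose.yml
    volumes:
      # Mount the project source into the container so that edits made in the
      # editor are visible inside it. Relative paths are resolved against the
      # location of the first Compose file, not this one.
      - .:/workspace:cached
    # Replace the default command so the container stays alive while the
    # editor attaches; the service can then be started from the debugger.
    command: /bin/sh -c "while sleep 1000; do :; done"
```

Keeping these changes in a separate file leaves the original docker-compose.yml untouched, so the same base configuration can still be used to run the service outside the editor.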
